1: New York University

2: Meta AI

Tl;dr: CLIP-Fields is a novel weakly supervised approach for learning a semantic robot memory that can respond to natural language queries, trained solely from raw RGB-D and odometry data with no extra human labelling. It combines the image and language understanding capabilities of recent vision-language models (VLMs) like CLIP, large language models like Sentence-BERT, and open-label object detection models like Detic with the spatial understanding capabilities of neural radiance field (NeRF) style architectures to build a spatial database that holds semantic information.

Abstract

We propose CLIP-Fields, an implicit scene model that can be trained with no direct human supervision. This model learns a mapping from spatial locations to semantic embedding vectors. The mapping can then be used for a variety of tasks, such as segmentation, instance identification, semantic search over space, and view localization. Most importantly, the mapping can be trained with supervision coming only from web-image and web-text trained models such as CLIP, Detic, and Sentence-BERT. When compared to baselines like Mask-RCNN, our method outperforms on few-shot instance identification or semantic segmentation on the HM3D dataset with only a fraction of the examples. Finally, we show that using CLIP-Fields as a scene memory, robots can perform semantic navigation in real-world environments.

Real World Robot Experiments

In these experiments, the robot navigates real-world environments to "go and look at" the objects described by the query. We expect this capability to make many downstream tasks possible from natural language queries alone.

Robot queries in a real lab kitchen setup.

Robot queries in a real lounge/library setup.

Interactive Demonstration

In this interactive demo, we show a heatmap of the association between environment points and natural language queries, as computed by a trained CLIP-Field. Note that this model was trained without any human labels, and none of these phrases ever appeared in the training set.

Method

CLIP-Fields is based on a series of simple ideas:

- Webscale models like CLIP and Detic provide lots of semantic information about objects that can be used for robot tasks, but don't encode spatial qualities of this information.

- NeRF-like approaches, on the other hand, have recently shown that they can capture very detailed scene information.

- We can combine these two, using a novel contrastive loss in order to capture scene-specific embeddings. We supervise multiple "heads," including object detection and CLIP, based on these webscale vision models, which allows us to do open-vocabulary queries at test time.

We collect our real-world data using an iPhone 13 Pro, whose LiDAR scanner gives us RGB-D and odometry information, which we use to establish a correspondence between pixels and real-world coordinates.
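The pixel-to-world correspondence can be sketched as a standard back-projection. This is a minimal illustration, assuming a pinhole camera model with intrinsics `fx, fy, cx, cy` and a camera-to-world pose given as a rotation matrix and a translation vector. These names and conventions are assumptions made for the example, not details taken from our data pipeline, and the axis conventions of a particular capture app may differ.

```python
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


def pixel_to_world(
    u: float, v: float, depth: float,
    fx: float, fy: float, cx: float, cy: float,
    rotation: Sequence[Sequence[float]],
    translation: Sequence[float],
) -> Vec3:
    """Back-project pixel (u, v) with metric depth into world coordinates."""
    # Pinhole model (assumed): ray through the pixel, scaled by the depth.
    point_cam = ((u - cx) * depth / fx, (v - cy) * depth / fy, depth)
    # Camera-to-world transform from odometry: world = R @ point_cam + t.
    x, y, z = (
        sum(rotation[i][j] * point_cam[j] for j in range(3)) + translation[i]
        for i in range(3)
    )
    return (x, y, z)


# Hypothetical values: a 640x480 image, identity rotation, camera at (1, 2, 3).
identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
center_point = pixel_to_world(320, 240, 2.0, 500, 500, 320, 240,
                              identity, (1.0, 2.0, 3.0))
```

Applying this to every pixel of every RGB-D frame gives a set of world-space points, each of which can carry the labels or embeddings computed for its pixel.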

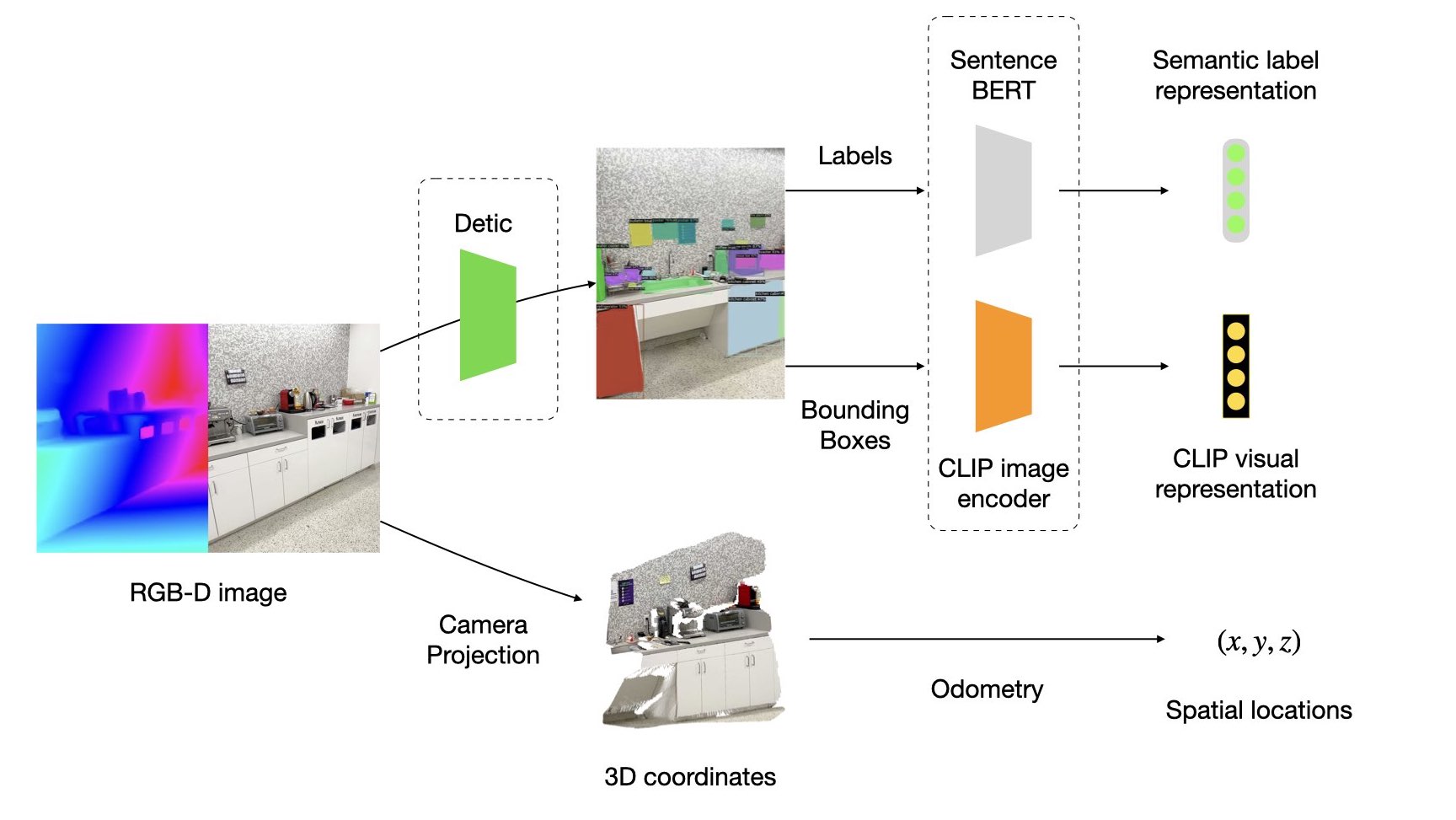

We use pre-trained models such as Detic and LSeg to extract open-label semantic annotations from the RGB images. We then encode the resulting labels with Sentence-BERT, and the proposed bounding boxes with the CLIP visual encoder. Note that training our models requires no human labelling at all: all of our supervision comes from pre-trained web-scale language models or VLMs.

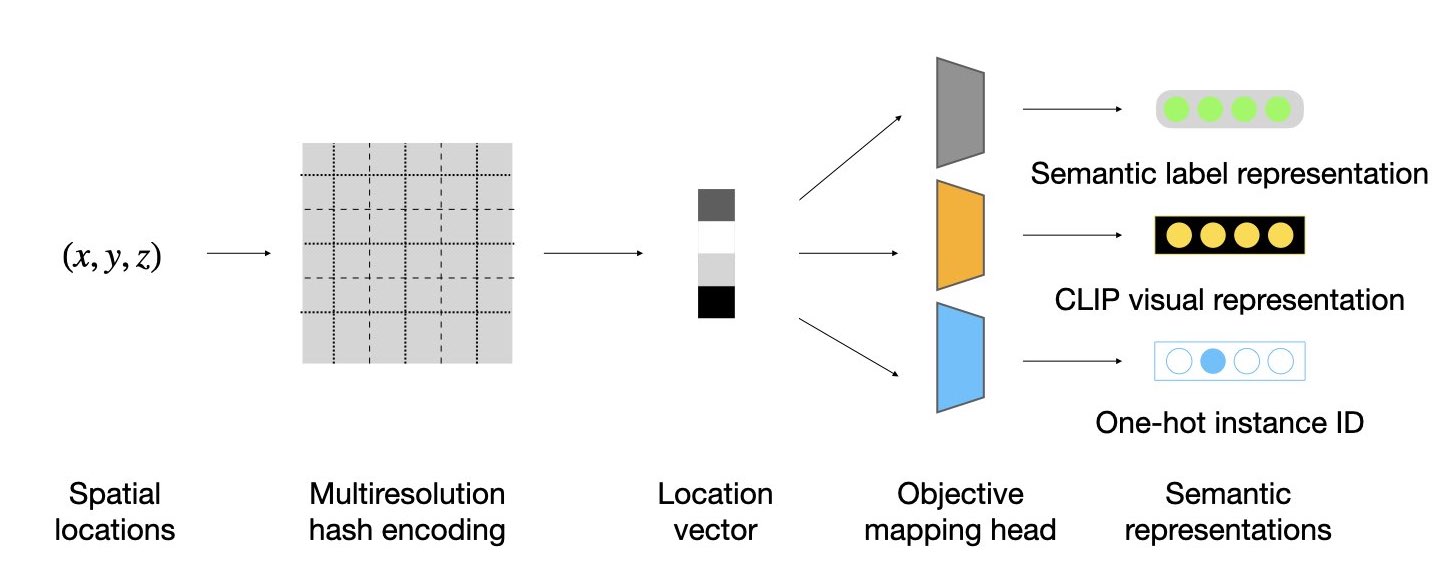

Our model is an implicit function that maps each 3-dimensional spatial location to a higher dimensional representation vector. Each vector contains both the language-based and vision-based semantic embeddings of the content at location (x, y, z).

The trunk of our model is a hash-grid architecture based on instant neural graphics primitives, which serves as our scene representation. We then use MLPs to map its features to higher dimensions that match the output dimensions of embedding models such as Sentence-BERT or CLIP.
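To make the shape of this mapping concrete, here is a deliberately simplified toy sketch. It assumes a single-resolution hash grid with nearest-vertex lookup and one linear layer per head, whereas the real trunk is multi-resolution with interpolation and the heads are MLPs. The class name, the hashing primes, and the tiny output dimensions are all illustrative assumptions, and training is omitted.

```python
import math
import random
from typing import Dict, List, Optional, Sequence

# Large primes for spatial hashing; an illustrative choice for this sketch.
PRIMES = (1, 2654435761, 805459861)


class TinyHashGridField:
    """Toy field: (x, y, z) -> one embedding per head ("text", "image")."""

    def __init__(self, table_size: int = 1024, feature_dim: int = 4,
                 resolution: int = 16,
                 head_dims: Optional[Dict[str, int]] = None,
                 seed: int = 0) -> None:
        rng = random.Random(seed)
        self.table_size = table_size
        self.resolution = resolution
        # Feature table: would be learned; randomly initialised here.
        self.table = [[rng.uniform(-0.01, 0.01) for _ in range(feature_dim)]
                      for _ in range(table_size)]
        # One linear head per target embedding space (toy dimensions).
        head_dims = head_dims or {"text": 8, "image": 6}
        self.heads = {
            name: [[rng.gauss(0.0, 0.1) for _ in range(feature_dim)]
                   for _ in range(dim)]
            for name, dim in head_dims.items()
        }

    def _hash(self, xyz: Sequence[float]) -> int:
        # Quantise each coordinate to a grid cell, then XOR-hash the cell index.
        h = 0
        for coord, prime in zip(xyz, PRIMES):
            h ^= math.floor(coord * self.resolution) * prime
        return h % self.table_size

    def __call__(self, xyz: Sequence[float]) -> Dict[str, List[float]]:
        feature = self.table[self._hash(xyz)]
        return {
            name: [sum(w * f for w, f in zip(row, feature)) for row in weights]
            for name, weights in self.heads.items()
        }


field = TinyHashGridField()
embeddings = field((0.1, 0.2, 0.3))  # dict with "text" and "image" vectors
```

In this toy version, all points that fall in the same grid cell share one feature vector. Interpolating across neighbouring vertices at several resolutions is what lets the full architecture represent fine spatial detail.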

We train with a contrastive loss that pushes the model's learned embeddings to be close to similar embeddings in the labeled datasets and far away from dissimilar embeddings. The contrastive training also helps us denoise the (sometimes) noisy labels produced by the pre-trained models.
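As a rough illustration of this idea, the sketch below implements a generic InfoNCE-style contrastive loss over a batch. It assumes that the i-th predicted embedding should match the i-th target embedding and that every other target in the batch is a negative. This is a textbook formulation shown for intuition, not necessarily the exact loss we train with, and the function names and the `temperature` value are assumptions.

```python
import math
from typing import List, Sequence


def normalize(vec: Sequence[float]) -> List[float]:
    """Scale a vector to unit length (zero vectors are left unchanged)."""
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]


def info_nce_loss(predicted: Sequence[Sequence[float]],
                  targets: Sequence[Sequence[float]],
                  temperature: float = 0.1) -> float:
    """Mean cross-entropy of matching predicted[i] to targets[i]."""
    preds = [normalize(p) for p in predicted]
    tgts = [normalize(t) for t in targets]
    total = 0.0
    for i, pred in enumerate(preds):
        # Cosine similarity to every target, sharpened by the temperature.
        logits = [sum(a * b for a, b in zip(pred, t)) / temperature
                  for t in tgts]
        # Numerically stable log-sum-exp over all candidates.
        top = max(logits)
        log_denominator = top + math.log(sum(math.exp(l - top) for l in logits))
        # Negative log-probability assigned to the positive pair.
        total += log_denominator - logits[i]
    return total / len(preds)


aligned = [[1.0, 0.0], [0.0, 1.0]]
swapped = [[0.0, 1.0], [1.0, 0.0]]
loss_matched = info_nce_loss(aligned, aligned)     # small: pairs agree
loss_mismatched = info_nce_loss(aligned, swapped)  # large: pairs disagree
```

Minimising such a loss pulls each location's embedding toward its own label embedding and away from the others in the batch.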

Experiments

On our robot, we load the CLIP-Field to help with the localization and navigation of the robot. When the robot gets a new text query, we first convert it to representation vectors by encoding it with Sentence-BERT and the CLIP text encoder. Then, we compare these representations with the representations of the XYZ coordinates in the scene and find the location in space that maximizes their similarity. We use the robot’s navigation stack to navigate to that region, and point the robot camera at the XYZ coordinate where the dot product was highest. We consider the navigation task successful if the robot can navigate to and point the camera at an object that satisfies the query.
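The lookup step can be sketched as a dot-product search over candidate points. This minimal example assumes the per-point text and image embeddings have already been computed by the field, and that the two similarity scores are combined with a simple weighted sum. The weighting scheme, the `text_weight` parameter, and the function names are assumptions for illustration only.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def best_location(points: Sequence[Point],
                  text_embeddings: Sequence[Sequence[float]],
                  image_embeddings: Sequence[Sequence[float]],
                  query_text: Sequence[float],
                  query_clip: Sequence[float],
                  text_weight: float = 0.5) -> Tuple[Point, float]:
    """Return the point whose embeddings best match the encoded query."""
    # Weighted sum of the two heads' dot products (an assumed combination).
    scores: List[float] = [
        text_weight * dot(t, query_text)
        + (1.0 - text_weight) * dot(v, query_clip)
        for t, v in zip(text_embeddings, image_embeddings)
    ]
    best = max(range(len(points)), key=scores.__getitem__)
    return points[best], scores[best]


# Toy scene with three points and 2-dimensional embeddings.
points = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 2.0, 0.0)]
point_embeddings = [[1.0, 0.0], [0.0, 1.0], [0.7, 0.7]]
target, score = best_location(points, point_embeddings, point_embeddings,
                              query_text=[0.0, 1.0], query_clip=[0.0, 1.0])
```

The returned coordinate would then be handed to the navigation stack as the goal region and as the point to aim the camera at.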

Future Work

We showed that CLIP-Fields can learn 3D semantic scene representations from little or no labeled data, relying on weakly-supervised web-image trained models, and that we can use these models to perform a simple “look-at” task. CLIP-Fields allow us to answer queries of varying levels of complexity. We expect this kind of 3D representation to be generally useful for robotics. For example, it may be enriched with affordances for planning; the geometric database can be readily combined with end-to-end differentiable planners. In future work, we also hope to explore models that share parameters across scenes and can handle dynamic scenes and objects.